
Agent Development Kit (ADK)

An open-source, code-first Python toolkit for building, evaluating, and deploying sophisticated AI agents with flexibility and control.

Important Links: Docs, Samples, Java ADK & ADK Web.

Agent Development Kit (ADK) is a flexible and modular framework for developing and deploying AI agents. While optimized for Gemini and the Google ecosystem, ADK is model-agnostic, deployment-agnostic, and built for compatibility with other frameworks. ADK was designed to make agent development feel more like software development, making it easier for developers to create, deploy, and orchestrate agentic architectures that range from simple tasks to complex workflows.

🔥 What's new

- Agent Config: Build agents without code. Check out the Agent Config feature.

- Tool Confirmation: A tool confirmation flow (human-in-the-loop, HITL) that can guard tool execution with explicit confirmation and custom input.
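To make the idea of a confirmation-guarded tool concrete, here is a minimal, framework-independent sketch. It is not ADK's tool confirmation API: `guarded_call`, `reimburse`, and the confirmer signature `(tool_name, args) -> (approved, custom_input)` are hypothetical names chosen for illustration. It only shows the shape of the flow: a tool runs only after explicit approval, and the approver may supply extra input.

```python
from typing import Any, Callable, Dict, Optional, Tuple

# Hypothetical confirmer signature (assumption, not ADK's API): receives the
# tool name and proposed arguments, returns (approved, custom_input).
Confirmer = Callable[[str, Dict[str, Any]], Tuple[bool, Optional[Dict[str, Any]]]]


def guarded_call(
    tool: Callable[..., Dict[str, Any]],
    args: Dict[str, Any],
    confirm: Confirmer,
) -> Dict[str, Any]:
    """Run tool(**args) only after explicit approval (illustrative only)."""
    approved, custom_input = confirm(tool.__name__, args)
    if not approved:
        # Skip execution and report the rejection instead of a tool result.
        return {"status": "rejected", "tool": tool.__name__}
    if custom_input:
        # Let values supplied by the human override or extend the arguments.
        args = {**args, **custom_input}
    return tool(**args)


def reimburse(amount: float, note: str = "") -> Dict[str, Any]:
    """Hypothetical side-effecting tool that should not run unattended."""
    return {"status": "success", "amount": amount, "note": note}


if __name__ == "__main__":
    approve = lambda name, args: (True, {"note": "approved by a reviewer"})
    print(guarded_call(reimburse, {"amount": 42.0}, approve))
```

In ADK itself the confirmation is wired into the agent's tool execution; see the Tool Confirmation documentation for the actual mechanism.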

✨ Key Features

- Rich Tool Ecosystem: Utilize pre-built tools, custom functions, OpenAPI specs, or integrate existing tools to give agents diverse capabilities, with tight integration into the Google ecosystem.

- Code-First Development: Define agent logic, tools, and orchestration directly in Python for ultimate flexibility, testability, and versioning.

- Modular Multi-Agent Systems: Design scalable applications by composing multiple specialized agents into flexible hierarchies.

- Deploy Anywhere: Easily containerize and deploy agents on Cloud Run or scale seamlessly with Vertex AI Agent Engine.
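The "custom functions" mentioned under Rich Tool Ecosystem can be ordinary Python functions. ADK can wrap a plain function as a tool, using its name, type hints, and docstring to describe it to the model. The sketch below assumes that convention; `roll_die` is a hypothetical tool in the spirit of the dice-roll samples, and the `status`/`result` dictionary shape is an assumption following common ADK guidance rather than a required schema.

```python
import random
from typing import Any, Dict


def roll_die(sides: int) -> Dict[str, Any]:
    """Roll a die with the given number of sides.

    Args:
        sides: Number of faces on the die; must be at least 1.

    Returns:
        A dict with "status" and, on success, the rolled value under "result".
    """
    if sides < 1:
        # Return a structured error the model can read, instead of raising.
        return {"status": "error", "error_message": "sides must be at least 1"}
    return {"status": "success", "result": random.randint(1, sides)}
```

Such a function would then be passed in an agent's `tools` list, for example `tools=[roll_die]`.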

🤖 Agent2Agent (A2A) Protocol and ADK Integration

For remote agent-to-agent communication, ADK integrates with the A2A protocol. See this example for how they can work together.

🚀 Installation

Stable Release (Recommended)

You can install the latest stable version of ADK using pip:

```bash
pip install google-adk
```

The release cadence is roughly bi-weekly.

This version is recommended for most users as it represents the most recent official release.

Development Version

Bug fixes and new features are merged into the main branch on GitHub first. If you need access to changes that haven't been included in an official PyPI release yet, you can install directly from the main branch:

```bash
pip install git+https://github.com/google/adk-python.git@main
```

Note: The development version is built directly from the latest code commits. While it includes the newest fixes and features, it may also contain experimental changes or bugs not present in the stable release. Use it primarily for testing upcoming changes or accessing critical fixes before they are officially released.
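When switching between the stable release and the main-branch build, it can help to confirm which version is actually installed in the current environment. A minimal sketch using only the standard library's `importlib.metadata`; the distribution name `google-adk` comes from the pip command above, and `installed_version` is a hypothetical helper name. `pip show google-adk` gives the same information from the shell.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(dist_name: str = "google-adk") -> Optional[str]:
    """Return the installed version string of dist_name, or None if absent."""
    try:
        return version(dist_name)
    except PackageNotFoundError:
        # The distribution is not installed in this environment.
        return None


if __name__ == "__main__":
    print(installed_version() or "google-adk is not installed")
```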

📚 Documentation

Explore the full documentation for detailed guides on building, evaluating, and deploying agents:

🏁 Feature Highlight

Define a single agent:

```python
from google.adk.agents import Agent
from google.adk.tools import google_search

root_agent = Agent(
    name="search_assistant",
    model="gemini-2.5-flash",  # Or your preferred Gemini model
    instruction="You are a helpful assistant. Answer user questions using Google Search when needed.",
    description="An assistant that can search the web.",
    tools=[google_search],
)
```

Define a multi-agent system:

Define a multi-agent system with a coordinator agent, a greeter agent, and a task execution agent. The ADK engine and the model then guide the agents to work together to accomplish the task.

```python
from google.adk.agents import LlmAgent

# Define individual agents (add instruction, description, tools, ... as needed)
greeter = LlmAgent(name="greeter", model="gemini-2.5-flash")
task_executor = LlmAgent(name="task_executor", model="gemini-2.5-flash")

# Create parent agent and assign children via sub_agents
coordinator = LlmAgent(
    name="Coordinator",
    model="gemini-2.5-flash",
    description="I coordinate greetings and tasks.",
    sub_agents=[  # Assign sub_agents here
        greeter,
        task_executor,
    ],
)
```
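The `sub_agents` list turns the agents above into a tree rooted at the coordinator. The sketch below is a toy, ADK-independent model of that hierarchy, meant only to illustrate the structure: `ToyAgent` is a hypothetical class, not part of ADK. To our understanding, ADK's own agents keep a comparable `parent_agent` link, allow an agent only one parent, and offer a name lookup over the tree, but the actual behavior should be checked in the ADK docs.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)  # identity comparison avoids recursing through parent links
class ToyAgent:
    """Illustrative stand-in for an agent node in a hierarchy (not an ADK class)."""

    name: str
    description: str = ""
    sub_agents: List["ToyAgent"] = field(default_factory=list)
    parent_agent: Optional["ToyAgent"] = field(default=None, repr=False)

    def __post_init__(self) -> None:
        # Link each child back to this node; a child may have only one parent.
        for child in self.sub_agents:
            if child.parent_agent is not None:
                raise ValueError(
                    f"{child.name} already has parent {child.parent_agent.name}"
                )
            child.parent_agent = self

    def find_agent(self, name: str) -> Optional["ToyAgent"]:
        """Depth-first search of this node and its descendants by name."""
        if self.name == name:
            return self
        for child in self.sub_agents:
            found = child.find_agent(name)
            if found is not None:
                return found
        return None


greeter = ToyAgent("greeter")
task_executor = ToyAgent("task_executor")
coordinator = ToyAgent(
    "Coordinator", "I coordinate greetings and tasks.", [greeter, task_executor]
)
```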

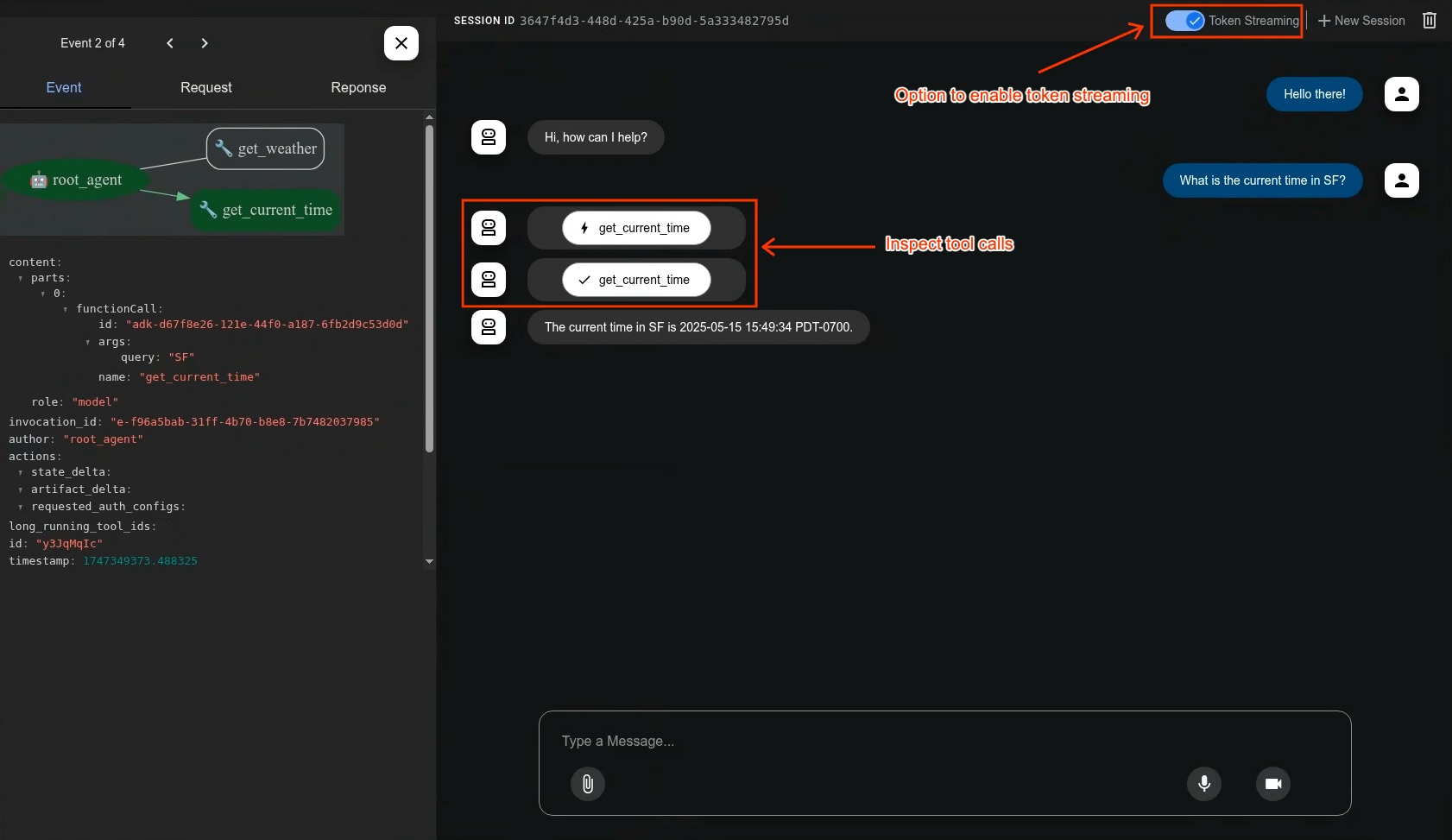

Development UI

A built-in development UI to help you test, evaluate, debug, and showcase your agent(s).

Evaluate Agents

```bash
adk eval \
    samples_for_testing/hello_world \
    samples_for_testing/hello_world/hello_world_eval_set_001.evalset.json
```
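The command above runs the agent against the cases in an eval set and scores the results. To our understanding, ADK's evaluation criteria include a tool-trajectory score (`tool_trajectory_avg_score`) that checks whether the agent made the expected tool calls. The sketch below is a simplified, ADK-independent stand-in for that idea, not ADK's implementation: `trajectory_exact_match` and `average_trajectory_score` are hypothetical helpers that award 1.0 per case only when the actual tool calls exactly match the expected ones, in order.

```python
from typing import Any, Dict, List, Tuple

ToolCall = Tuple[str, Dict[str, Any]]  # (tool name, arguments)


def trajectory_exact_match(expected: List[ToolCall], actual: List[ToolCall]) -> float:
    """1.0 if the agent made exactly the expected tool calls in order, else 0.0."""
    return 1.0 if expected == actual else 0.0


def average_trajectory_score(
    cases: List[Tuple[List[ToolCall], List[ToolCall]]],
) -> float:
    """Mean exact-match score over (expected, actual) pairs; 0.0 for no cases."""
    if not cases:
        return 0.0
    return sum(trajectory_exact_match(e, a) for e, a in cases) / len(cases)
```

Exact matching is the strictest choice; real evaluators may also tolerate reordering or extra calls, which this sketch deliberately does not.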

🤝 Contributing

We welcome contributions from the community! Whether it's bug reports, feature requests, documentation improvements, or code contributions, please see the following:

- General contribution guidelines and flow.

- Then, if you want to contribute code, please read the Code Contributing Guidelines to get started.

Vibe Coding

If you are developing agents via vibe coding, you can provide llms.txt or llms-full.txt to your LLM as context. The former is a summarized version; the latter contains the full information and is suited to LLMs with a large enough context window.
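Choosing between the two files comes down to whether the full text fits comfortably in your model's context window. A minimal sketch of that decision, under stated assumptions: roughly 4 characters per token is a common rule of thumb (actual tokenization varies by model), and reserving half the window for the conversation itself is an arbitrary default. `choose_llms_file` is a hypothetical helper; measure the real file length yourself rather than relying on any assumed size.

```python
def choose_llms_file(
    full_text_chars: int,
    context_window_tokens: int,
    chars_per_token: float = 4.0,  # rough heuristic (assumption)
    headroom: float = 0.5,  # fraction of the window the file may use (assumption)
) -> str:
    """Pick llms-full.txt if it fits within the budget, else fall back to llms.txt."""
    estimated_tokens = full_text_chars / chars_per_token
    budget = context_window_tokens * headroom
    return "llms-full.txt" if estimated_tokens <= budget else "llms.txt"
```

For example, a 400,000-character file is estimated at 100,000 tokens, so it fits a 1,000,000-token window with this headroom but not a 128,000-token one.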

📄 License

This project is licensed under the Apache 2.0 License - see the LICENSE file for details.

Happy Agent Building!